There’s nothing like making a baby smile. Having a baby recognize you and show affection, be it a smile, a wave, or a laugh, is an undeniably good feeling. In fact, a baby’s smile has real scientific power. Studies have demonstrated that viewing smiling babies reduces the body’s sense of danger and stress [1, 2]. Essentially, when a baby recognizes you and smiles, your body reacts with less stress and a calmer disposition. However, not every interaction with an infant can happen face to face. Long-distance grandparents and other family members can often interact with their infant loved one only via video chat, such as FaceTime. During the COVID-19 pandemic especially, many infants’ interactions with loved ones took place almost entirely over a screen. If you have ever talked with an infant loved one over FaceTime, have you wondered whether they would recognize you in person? Researchers have determined how adults recognize faces, but what about infants? Would they be able to recognize someone they’ve only interacted with on video chat? This article will break down the basis of face recognition, explain how it works in infants, and answer the question: is FaceTime enough?

Humans use the sense of sight to see and understand the world around us. While sight might be the last of our senses to develop, it is tremendously important for understanding both the physical aspects of our world, like location, and the social aspects of our world, like faces [3]. Of all the things we use our eyes to see, human faces are arguably among the most viewed and most important. Face perception in humans refers to the mental process of identifying, recognizing, and interpreting faces, which allows us to determine a person’s gender, age, emotions, or identity [4, 5]. Face perception is an important part of social interaction, so important that evolution has shaped humans into what scientists call ‘face experts’ [6]. In fact, humans can even understand someone’s intentions or get a glimpse into their internal thoughts just by looking at faces and recognizing their individual differences [5].

Scientists believe that humans evolved to emphasize face recognition because of its importance in social interactions and emotional cognition. Being able to differentiate between faces helps facilitate social interactions, since you can apply what you know about an individual when conversing. For example, if you know you are talking to Shui instead of Lilly, you could ask about the vacation she went on last week, or comment on a recipe you saw that you know she would like. Reading faces matters even when interacting with strangers, since it lets you perceive their emotions and intentions. For example, if you are talking to a stranger about your research and they seem disconnected or disinterested, you would know to either change the topic or make your research sound more engaging. Without face recognition, humans would struggle to make connections and act appropriately in social interactions. In fact, it is so important that there is a special brain area dedicated to helping us perceive and recognize different faces.

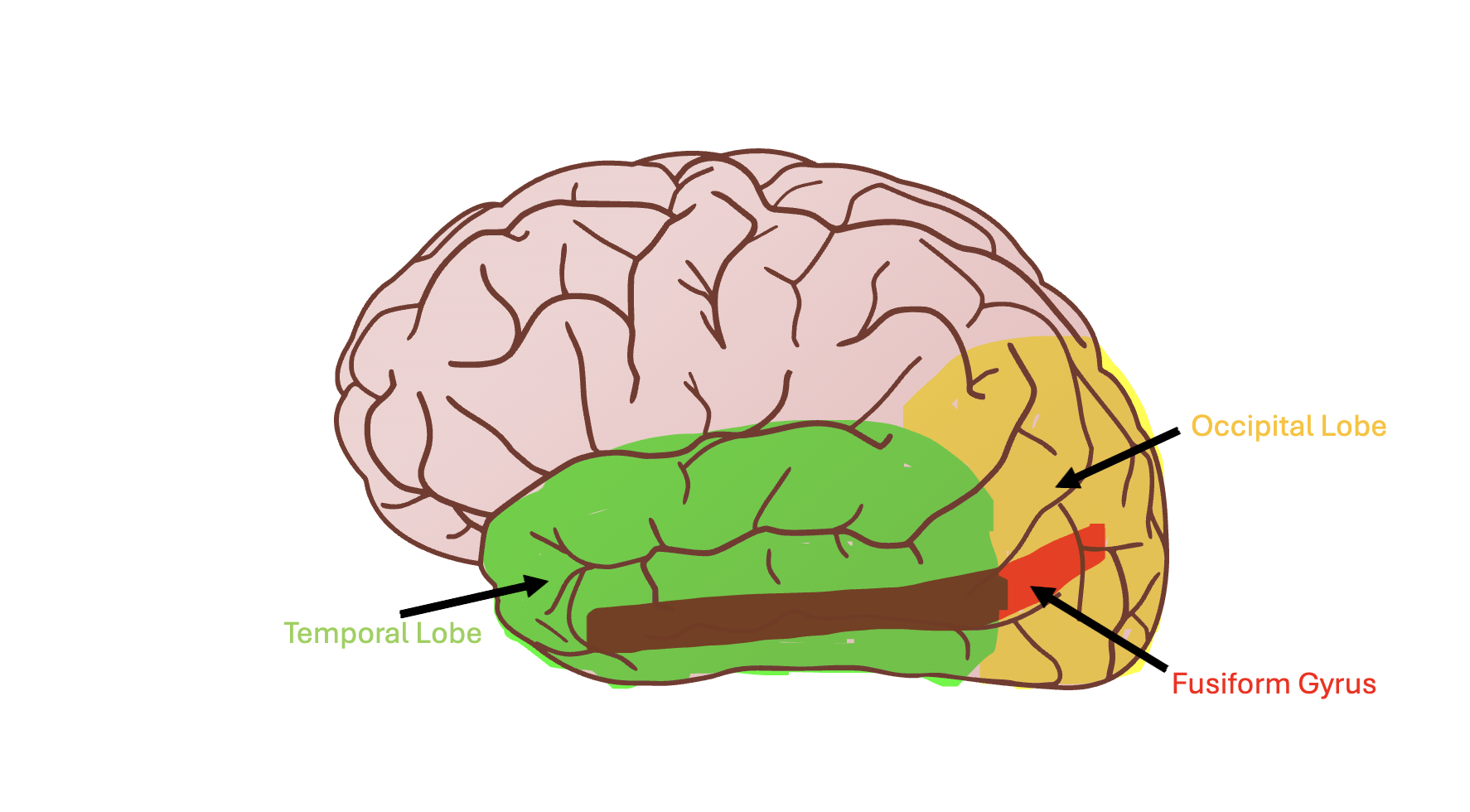

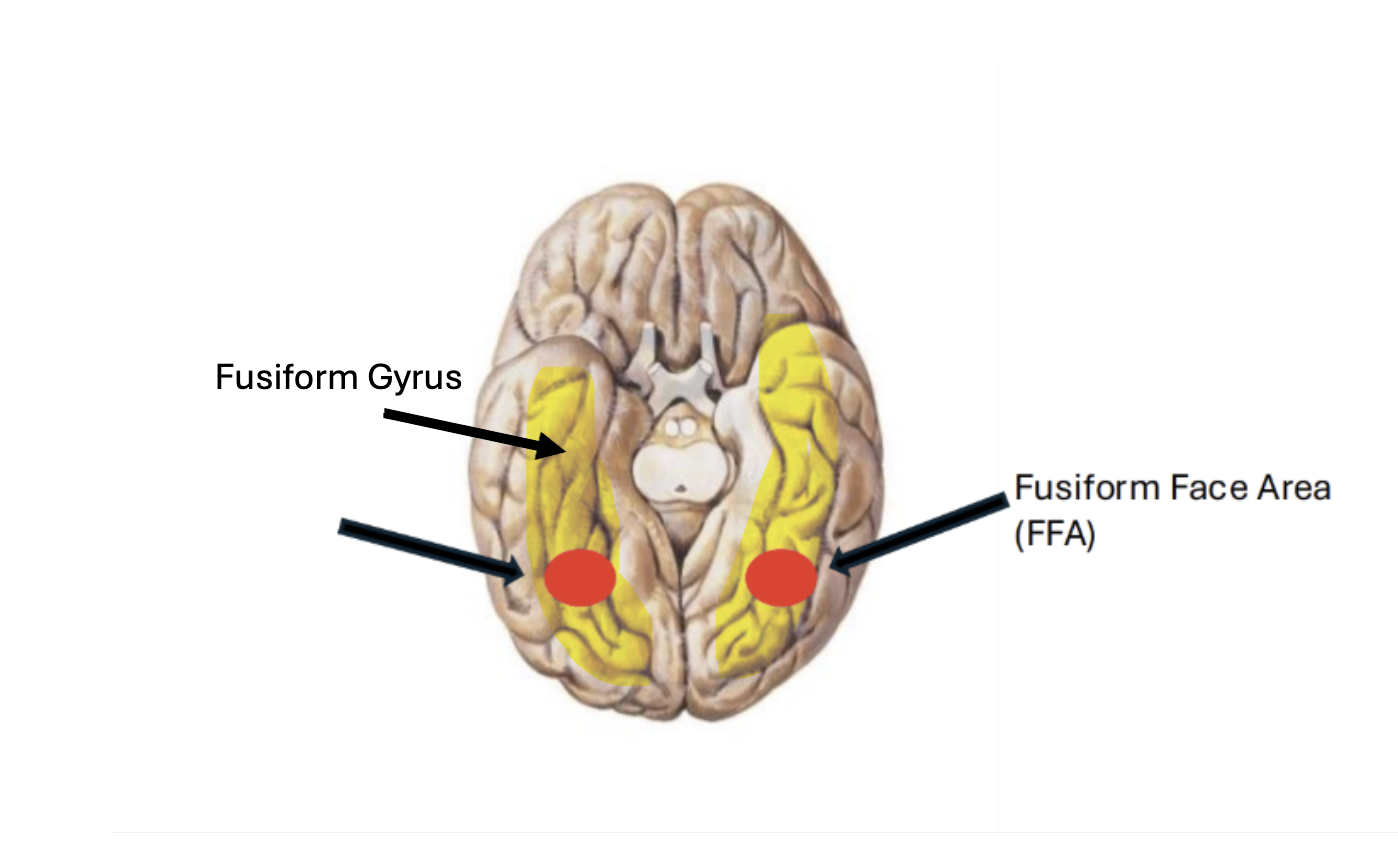

This special brain region is called the fusiform face area. The fusiform face area has developed with the purpose of helping humans identify and distinguish between human faces [4]. Specifically, the fusiform face area detects and responds to the individual shapes and spacing of facial features to help recognize and process faces [7, 8]. The fusiform face area is located in the fusiform gyrus, which sits on the bottom surface of the brain between two of the brain’s four major lobes, the occipital and temporal lobes [7]. The occipital lobe acts as the brain’s main visual processing center, taking information from the eyes and converting it into meaningful information [9]. The temporal lobe helps process sensory information, especially sound and sight, to create memories and assist in visual recognition [10]. We can think of the occipital lobe as the first station on the sensory and visual processing railway, with the temporal lobe being the last stop. The fusiform gyrus is a crucial connection between the occipital and temporal lobes and plays a major role in processing complex and important information [11]. For example, it is important in face recognition, object recognition, and understanding visual text [11, 12]. In this picture, the fusiform gyrus acts as the train, relaying visual and sensory information between the occipital and temporal lobes to help facilitate object and face recognition. The fusiform face area is a railcar on the visual processing train, one that is honed for faces. But how did it become so attuned to faces? Evolution!

While the fusiform face area is known for its face expertise, it is actually believed to be an area that supports specialized visual discrimination in general, used to identify and discern between different objects, faces, or landscapes [4]. For humans, this area has developed to become highly face-selective because we are constantly surrounded by faces. Faces are the visual stimulus humans have the most experience viewing and interpreting, so this area evolved to be selective for them. Evidence for this claim comes from experiments in which participants view faces: the fusiform face area shows higher activation than other brain areas involved in visual processing [13, 14]. What’s interesting is that a similar activation pattern appears in the fusiform face area when experts view non-face objects from their field of expertise. For example, if a car expert were viewing images of cars, we would see a high-activation pattern in the fusiform face area similar to that observed when viewing faces [13]. This evidence supports the idea that the fusiform face area is simply a brain area that develops based on experience and expertise with a specific visual stimulus, which for most people is human faces. But if infants can recognize faces too, how early does the fusiform face area actually begin to become selective for faces?

Some evidence suggests that as early as 2 months old, infants begin to show a preference for face-like stimuli compared to landscapes or scrambled faces [15]. One way researchers can measure an infant’s visual preference is by using eye-tracking. Eye-tracking uses a special infrared camera that tracks light reflecting off the cornea to measure where the eye is looking [16]. Unlike a thermal camera, which measures heat signatures instead of visible light [17], an eye tracker’s camera picks up near-infrared light reflected from the eye, which the infant cannot see. The eye tracker can record total looking time, fixation, and pupil size, which researchers associate with visual preference and attention [16, 18]. When infants consistently pay more attention to a specific visual stimulus, like faces, researchers take it as evidence that an early-emerging, evolved process of face recognition is at work.

Infants show increased attention and preference, as evidenced by longer looking times and fixations, for certain physical properties like symmetry, high-contrast elements, or low spatial frequency features [19]. If you have ever seen a book made for babies, you might have noticed that its pictures are in black and white, or otherwise drawn with extreme contrast and symmetry. This is not an accident. Vision is a baby’s least developed sense at birth, resulting in blurry and uncoordinated eyesight [3]. These restrictions cause infants to gravitate towards high-contrast and simple images. Since faces are inherently symmetrical, with high-contrast elements like the eyes and mouth, infants show an innate preference for faces from birth. The continued exposure to faces and the innate preference for certain physical features both contribute to the development of the fusiform face area in infants and to their preference for human faces [20]. Additionally, just as in adults, there is an evolutionary reason for this development: being able to interpret and distinguish between faces is critical to our survival as humans and to our social and cognitive development [6, 21]. The more infants view faces, the further the fusiform face area develops [20].

Not only do infants show a preference for human faces, but they also show an increased preference for their mother’s face [22, 23]. This is because infants prefer faces that are socially relevant to them and faces that are more familiar [22]. This preference for socially relevant faces extends beyond the mother to caregivers and frequently seen loved ones. But how does this preference shift when viewing faces over video chat? Would an infant be able to recognize a loved one in person after conversing online? Research suggests that live video chatting is meaningfully different from pre-recorded videos, as it allows for increased joint visual attention between the infant and caregiver [24, 25]. Joint visual attention is the process by which the eyes follow the direction of another person’s attention to an object, or the shared attention between child and adult on an object [24, 25]. Joint visual attention is critical to an infant’s developing social interactions and cognitive development. Research showed that infants demonstrated longer looking times if the joint visual attention consisted of multiple elements, such as mutual eye gaze, touch, and talk. Since pre-recorded videos eliminate responsive talking and mutual eye gaze, they typically result in lower joint visual attention [25]. Higher joint visual attention suggests that the infant is showing increased attention and preference, and this effect is stronger during live video chats.

The best evidence we have that babies can get to know someone on the other side of a screen comes from a study that compared two types of learning. Children aged 12-25 months were shown either live or pre-recorded informative videos in which they were taught new words, actions, and patterns. Participants interacted with the tablet videos for 2 hours total over a week and did not meet their learning partner in person until the final session, held in the lab. Results revealed that both groups (live and pre-recorded) paid attention and responded to their onscreen partners, but only the live group responded in sync with their partner. Additionally, only children who experienced live learning were able to recognize their partner in person during the last session and to learn new cognitive information (new words and patterns). Results demonstrated that live video learning differs from pre-recorded informative videos by developing a social connection, which leads to increased learning [26]. Additionally, the results support the claim that infants would be able to recognize someone they converse with over video chat, as long as it is live.

Face perception is an innate process that is necessary for facilitating social interactions, emotional understanding, and human survival. It is supported by a specialized visual discrimination area, called the fusiform face area, which appears to begin developing in infants as young as 2 months old. Infants are born with preferences for key features of facial configurations, like symmetry and high-contrast elements (eyes, nose, mouth). As infants gain more experience viewing faces, their fusiform face area develops and they become experts in differentiating faces, showing preferences for their mothers or caregivers. Evidence supports the claim that live video chats are different from pre-recorded videos, as they allow infants to actively follow eye gaze, share visual attention, and hear responses in real time. These aspects of live video chatting increase learning, attention, and memory. So, is FaceTime enough? Based on the evidence presented, an infant would be able to recognize someone they speak to over FaceTime, especially if that person is socially relevant to them. Infants show a preference for familiar faces, and coupled with the increased joint visual attention that comes from live video chatting, this means they are able to recognize someone both over FaceTime and in person after chatting.

References

[1] Godwin, J. (2019, November 14). Yes, babies’ smiles are that magical. Medium. https://medium.com/apparently/yes-babies-smiles-are-that-magical-b7046e2d6e6b

[2] Mizugaki, S., Maehara, Y., Okanoya, K., & Myowa-Yamakoshi, M. (2015). The power of an infant’s smile: Maternal physiological responses to infant emotional expressions. PLOS ONE, 10(6). https://doi.org/10.1371/journal.pone.0129672

[3] Riser, D., Spielman, R., & Biek, D. (2024). Sensory Development in Infants and Toddlers. In Lifespan Development. OpenStax. Retrieved April 22, 2026, from https://openstax.org/books/lifespan-development/pages/1-what-does-psychology-say

[4] Kanwisher, N., & Yovel, G. (2006). The fusiform face area: A cortical region specialized for the perception of faces. Philosophical Transactions of the Royal Society B: Biological Sciences, 361(1476), 2109–2128. https://doi.org/10.1098/rstb.2006.1934

[5] Leopold, D. A., & Rhodes, G. (2010). A comparative view of face perception. Journal of Comparative Psychology, 124(3), 233–251. https://doi.org/10.1037/a0019460

[6] Pascalis, O., de Martin de Viviés, X., Anzures, G., Quinn, P. C., Slater, A. M., Tanaka, J. W., & Lee, K. (2011). Development of Face Processing. WIREs Cognitive Science, 2(6), 666–675. https://doi.org/10.1002/wcs.146

[7] Collins, J. A., & Olson, I. R. (2014). Beyond the FFA: The role of the ventral anterior temporal lobes in face processing. Neuropsychologia, 61, 65–79. https://doi.org/10.1016/j.neuropsychologia.2014.06.005

[8] Liu, J., Harris, A., & Kanwisher, N. (2010). Perception of face parts and face configurations: An fMRI study. Journal of Cognitive Neuroscience, 22(1), 203–211. https://doi.org/10.1162/jocn.2009.21203

[9] Rehman, A., & Al Khalili, Y. (2023, July 24). Neuroanatomy, Occipital Lobe. StatPearls [Internet]. https://www.ncbi.nlm.nih.gov/books/NBK544320/

[10] Cleveland Clinic. (2025, December 22). Temporal Lobe: What it is, function, Location & Damage. https://my.clevelandclinic.org/health/body/16799-temporal-lobe

[11] Weiner, K. S., & Zilles, K. (2016). The anatomical and functional specialization of the fusiform gyrus. Neuropsychologia, 83, 48–62. https://doi.org/10.1016/j.neuropsychologia.2015.06.033

[12] Zhang, W., Wang, J., Fan, L., Zhang, Y., Fox, P. T., Eickhoff, S. B., Yu, C., & Jiang, T. (2016). Functional organization of the fusiform gyrus revealed with connectivity profiles. Human Brain Mapping, 37(8), 3003–3016. https://doi.org/10.1002/hbm.23222

[13] Gauthier, I., Skudlarski, P., Gore, J. C., & Anderson, A. W. (2002). Expertise for cars and birds recruits brain areas involved in face recognition. Foundations in Social Neuroscience, 277–292. https://doi.org/10.7551/mitpress/3077.003.0022

[14] Kanwisher, N., Stanley, D., & Harris, A. (1999). The fusiform face area is selective for faces not animals. NeuroReport, 10(1), 183–187. https://doi.org/10.1097/00001756-199901180-00035

[15] Smith, D. G. (2024, February 20). Infants as young as two months may be able to detect faces and scenes. Scientific American. https://www.scientificamerican.com/article/infants-as-young-as-two-months-may-be-able-to-detect-faces-and-scenes/

[16] Chhimpa, G. R., Kumar, A., Garhwal, S., Kumar, D., Wani, N. A., Wani, M. A., & Shakil, K. A. (2025). A comprehensive framework for eye tracking: Methods, tools, applications, and cross-platform evaluation. Journal of Eye Movement Research, 18(5), 47. https://doi.org/10.3390/jemr18050047

[17] Narbutas, V., Bernatavicius, D., & Augulis, L. (2010). THERMOVISION CAMERAS FOR RESEARCH AND DEVELOPMENT. Radiation Interaction With Material and Its Use in Technologies, 3, 73–76.

[18] Aslin, R. N. (2011). Infant eyes: A window on cognitive development. Infancy, 17(1), 126–140. https://doi.org/10.1111/j.1532-7078.2011.00097.x

[19] Oakes, L. M., & Ellis, A. E. (2011). An Eye‐tracking investigation of developmental changes in infants’ exploration of upright and inverted human faces. Infancy, 18(1), 134–148. https://doi.org/10.1111/j.1532-7078.2011.00107.x

[20] Morton, J., & Johnson, M. H. (1991). CONSPEC and CONLERN: A two-process theory of infant face recognition. Psychological Review, 98(2), 164–181. https://doi.org/10.1037/0033-295x.98.2.164

[21] Simion, F., & Giorgio, E. D. (2015). Face perception and processing in early infancy: Inborn Predispositions and Developmental changes. Frontiers in Psychology, 6. https://doi.org/10.3389/fpsyg.2015.00969

[22] Rigato, S., Stets, M., Charalambous, S., Dvergsdal, H., & Holmboe, K. (2023). Infant visual preference for the mother’s face and longitudinal associations with emotional reactivity in the first year of life. Scientific Reports, 13(1). https://doi.org/10.1038/s41598-023-37448-8

[23] Thomas, L. (2023). Looking into the future: infant visual preference for mother’s face predicts emotional resilience. News Medical.

[24] McClure, E. R., Chentsova-Dutton, Y. E., Holochwost, S. J., Parrott, W. G., & Barr, R. (2018). Look at that! video chat and joint visual attention development among babies and toddlers. Child Development, 89(1), 27–36. https://doi.org/10.1111/cdev.12833

[25] Myers, L. J., Strouse, G. A., McClure, E. R., Keller, K. R., Neely, L. I., Stoto, I., Vadakattu, N. S., Kim, E. D., Troseth, G. L., Barr, R., & Zosh, J. M. (2024). Look at grandma! joint visual attention over video chat during the COVID-19 pandemic. Infant Behavior and Development, 75, 101934. https://doi.org/10.1016/j.infbeh.2024.101934

[26] Myers, L. J., LeWitt, R. B., Gallo, R. E., & Maselli, N. M. (2016). Baby facetime: Can toddlers learn from online video chat? Developmental Science, 20(4). https://doi.org/10.1111/desc.12430

[27] Getty Images. (2009). Illustration of cerebral hemisphere, lower and medial surface of brain. De Agostini Picture Library. Retrieved April 30, 2026.